Compressed Sensing for Efficient Encoding of Dense 3D Meshes Using Model-Based Bayesian Learning

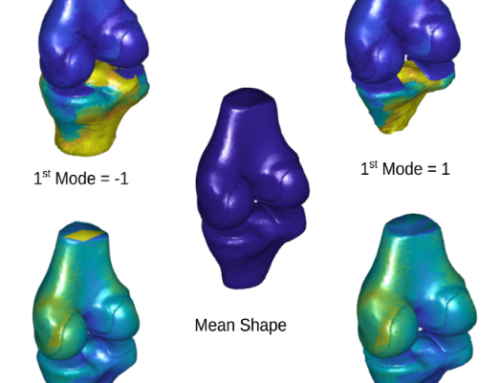

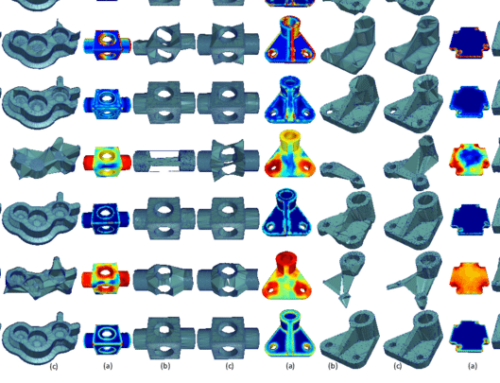

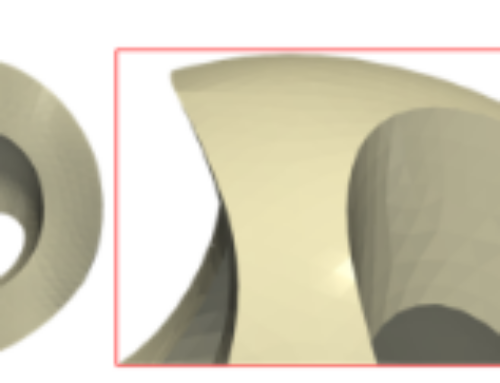

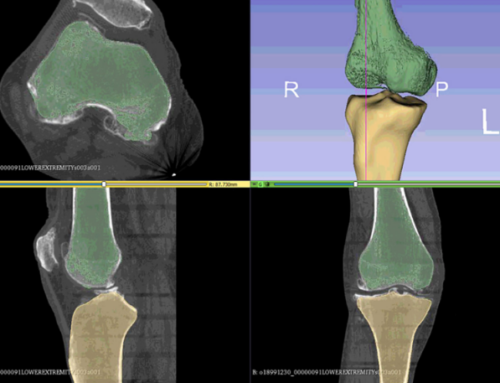

With the growing demand for easy and reliable generation of 3D models representing real-world or synthetic objects, new schemes for the acquisition, storage, and transmission of 3D meshes are required. In principle, 3D meshes consist of vertex positions and vertex connectivity. Vertex position encoders are much more resource-demanding than connectivity encoders, which stresses the need for novel geometry compression schemes. An accurate and efficient geometry compression system should increase the compression ratio without affecting the visual quality of the object, while minimizing the computational complexity. In this paper, we present novel compression/reconstruction schemes that enable aggressive compression ratios without significantly reducing the visual quality. Encoding requires only additions and subtractions. The benefits of the proposed method become more apparent as the density of the meshes increases, and the method provides a flexible framework for trading efficiency against reconstruction quality. We derive a novel Bayesian learning algorithm that models the most significant graph Fourier transform coefficients of each submesh as a multivariate Gaussian distribution. We then iteratively estimate the distribution parameters using the expectation-maximization approach. To improve the performance of the proposed approach in highly underdetermined problems, we exploit the local smoothness of the partitioned surfaces. Extensive evaluation studies, carried out on a large collection of different 3D models, show that the proposed schemes achieve compression ratios competitive with state-of-the-art approaches while offering significantly lower encoding complexity.
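The encoding idea in the abstract can be illustrated with a toy sketch. It is not the authors' algorithm: it assumes a random +1/-1 sensing matrix (one choice consistent with an encoder that only adds and subtracts), uses a 1D path graph as a stand-in for a submesh (its Laplacian eigenvectors are the DCT-II basis, so no eigensolver is needed), and replaces the Bayesian learning and expectation-maximization step with a plain least-squares fit of the lowest-frequency graph Fourier coefficients. All function names and the sizes `n`, `k`, and `m` are hypothetical.

```python
import math
import random

def path_gft_basis(n, k):
    """First k Laplacian eigenvectors of an n-vertex path graph (DCT-II basis)."""
    basis = []
    for j in range(k):
        vec = [math.cos(math.pi * j * (i + 0.5) / n) for i in range(n)]
        norm = math.sqrt(sum(v * v for v in vec))
        basis.append([v / norm for v in vec])
    return basis  # k rows, each of length n

def sign_matrix(m, n, rng):
    """Random +1/-1 sensing matrix (an assumed choice, not taken from the paper)."""
    return [[rng.choice((-1, 1)) for _ in range(n)] for _ in range(m)]

def encode(phi, x):
    """Compute y = Phi x using only additions and subtractions."""
    y = []
    for row in phi:
        acc = 0.0
        for s, xi in zip(row, x):
            acc = acc + xi if s > 0 else acc - xi
        y.append(acc)
    return y

def solve(a, b):
    """Solve a small dense system by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    sol = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * sol[c] for c in range(r + 1, n))
        sol[r] = (aug[r][n] - tail) / aug[r][r]
    return sol

def decode(phi, y, basis):
    """Least-squares fit of the k low-frequency coefficients, then inverse GFT."""
    k, n = len(basis), len(basis[0])
    # A = Phi U_k, an m x k matrix
    a = [[sum(p * u for p, u in zip(row, b)) for b in basis] for row in phi]
    # Normal equations: (A^T A) c = A^T y
    ata = [[sum(r[i] * r[j] for r in a) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * yi for r, yi in zip(a, y)) for i in range(k)]
    c = solve(ata, aty)
    return [sum(c[j] * basis[j][i] for j in range(k)) for i in range(n)]

# Toy run: 64 coordinates compressed to 16 measurements.
n, k, m = 64, 6, 16
rng = random.Random(0)
basis = path_gft_basis(n, k)
true_coeffs = [rng.uniform(-1, 1) for _ in range(k)]
# A smooth signal that lies exactly in the span of the k lowest frequencies.
x = [sum(true_coeffs[j] * basis[j][i] for j in range(k)) for i in range(n)]
phi = sign_matrix(m, n, rng)
y = encode(phi, x)
x_hat = decode(phi, y, basis)
max_err = max(abs(a - b) for a, b in zip(x, x_hat))
```

In this toy setting the signal is exactly band-limited and `m > k`, so the least-squares decoder recovers it up to floating-point error. Real mesh geometry is only approximately smooth, which is where a statistical model of the coefficients, such as the one the abstract describes, would come in.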

A. S. Lalos, I. Nikolas, E. Vlachos, and K. Moustakas, “Compressed sensing for efficient encoding of dense 3D meshes using model-based Bayesian learning,” IEEE Transactions on Multimedia, vol. 19, no. 1, pp. 41-53, Jan. 2017. doi: 10.1109/TMM.2016.2605927